- Local Setup Tutorial

- Setup: Download and Start Flink

- Start a Local Flink Cluster

- Read the Code

- Run the Example

- Next Steps

Local Setup Tutorial

Get a Flink example program up and running in a few simple steps.

Setup: Download and Start Flink

Flink runs on Linux, Mac OS X, and Windows. The only requirement is a working Java 8.x installation. Windows users should consult the Flink on Windows guide, which describes how to run Flink locally on Windows.

To check that Java is installed correctly, run:

java -version

If you have Java 8, the output will look something like this:

java version "1.8.0_111"
Java(TM) SE Runtime Environment (build 1.8.0_111-b14)
Java HotSpot(TM) 64-Bit Server VM (build 25.111-b14, mixed mode)
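The same check can be made from inside a JVM, which helps when several Java installations are present and it is unclear which one a program is started with. The following is a minimal sketch, not part of Flink: the class name `JavaVersionCheck` and the helper `isJava8` are hypothetical, and it assumes that Java 8 reports a `java.version` property beginning with `1.8`, as in the output above.

```java
// Hypothetical helper, not part of Flink: checks the version of the running JVM.
final class JavaVersionCheck {

    /** Returns true if the version string denotes Java 8, e.g. "1.8.0_111". */
    static boolean isJava8(String version) {
        // Assumption: Java 8 reports versions of the form "1.8" or "1.8.x_y".
        return version.equals("1.8") || version.startsWith("1.8.");
    }

    public static void main(String[] args) {
        String version = System.getProperty("java.version");
        System.out.println("Detected Java version: " + version);
        System.out.println(isJava8(version)
                ? "Java 8 found."
                : "This is not Java 8; check your installation.");
    }
}
```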

- Download a binary from the downloads page. You can pick any Scala variant you like. For certain features you may also have to download one of the pre-bundled Hadoop jars and place them into the /lib directory.

- Go to the download directory.

- Unpack the downloaded archive.

$ cd ~/Downloads        # Go to download directory
$ tar xzf flink-*.tgz   # Unpack the downloaded archive
$ cd flink-1.9.0

Mac OS X users can install Flink through Homebrew.

$ brew install apache-flink
...
$ flink --version
Version: 1.2.0, Commit ID: 1c659cf

Start a Local Flink Cluster

$ ./bin/start-cluster.sh # Start Flink

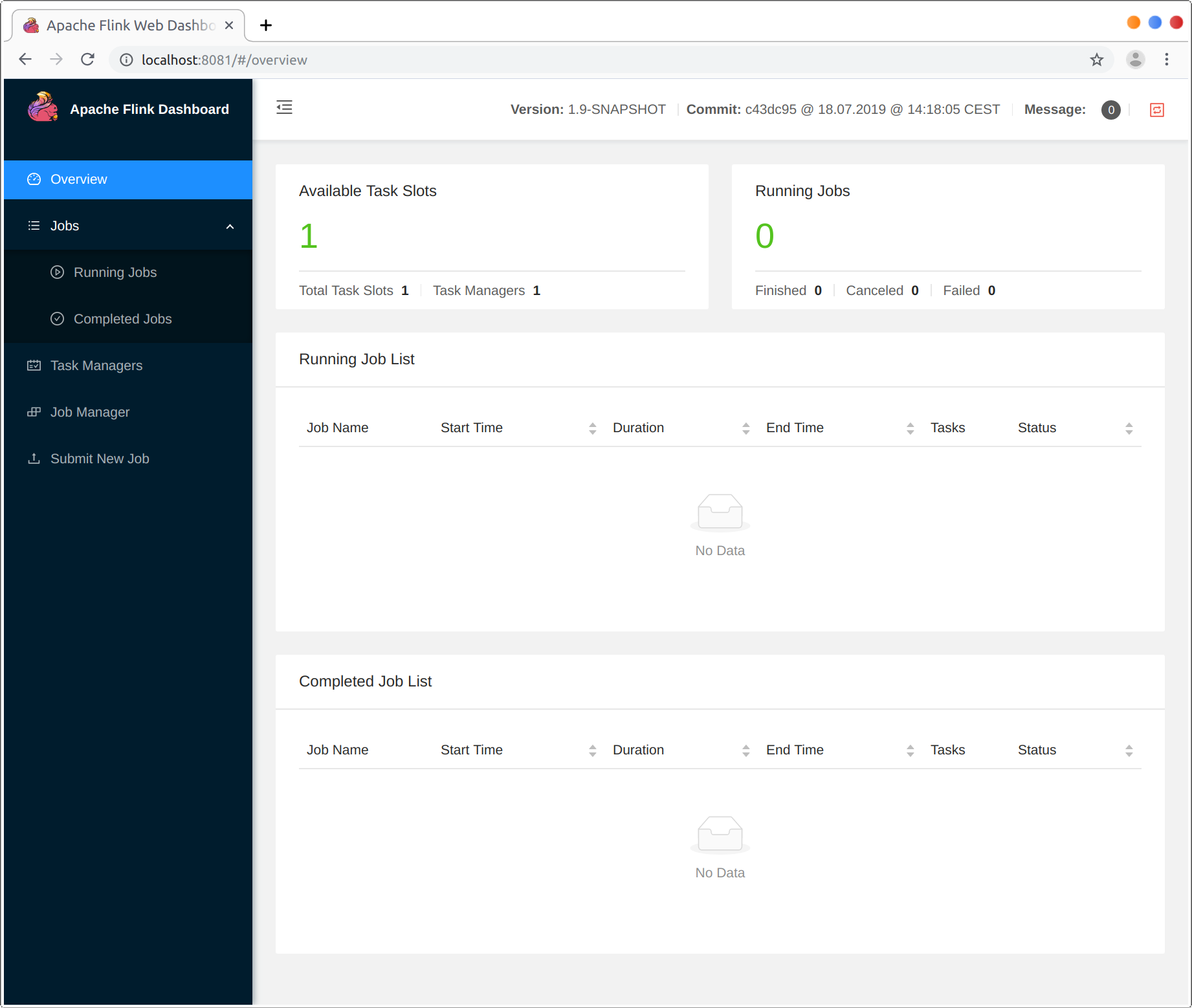

Check the Dispatcher’s web frontend at http://localhost:8081 and make sure everything is up and running. The web frontend should report a single available TaskManager instance.

You can also verify that the system is running by checking the log files in the log directory:

$ tail log/flink-*-standalonesession-*.log
INFO ... - Rest endpoint listening at localhost:8081
INFO ... - http://localhost:8081 was granted leadership ...
INFO ... - Web frontend listening at http://localhost:8081.
INFO ... - Starting RPC endpoint for StandaloneResourceManager at akka://flink/user/resourcemanager .
INFO ... - Starting RPC endpoint for StandaloneDispatcher at akka://flink/user/dispatcher .
INFO ... - ResourceManager akka.tcp://flink@localhost:6123/user/resourcemanager was granted leadership ...
INFO ... - Starting the SlotManager.
INFO ... - Dispatcher akka.tcp://flink@localhost:6123/user/dispatcher was granted leadership ...
INFO ... - Recovering all persisted jobs.
INFO ... - Registering TaskManager ... at ResourceManager
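The check on the web frontend can also be scripted. The sketch below is a rough illustration rather than an official client: it assumes that the REST endpoint on port 8081 serves a cluster summary at `/overview` and that the returned JSON contains a numeric `taskmanagers` field, so verify both against the REST API documentation for your Flink version. The class name `ClusterOverviewCheck` and its helpers are hypothetical, and the field is extracted with a regular expression to avoid a JSON library dependency.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.net.HttpURLConnection;
import java.net.URL;
import java.nio.charset.StandardCharsets;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper, not part of Flink.
final class ClusterOverviewCheck {

    /** Extracts a non-negative integer field from a flat JSON object; returns -1 if absent. */
    static int intField(String json, String name) {
        Matcher m = Pattern
                .compile("\"" + Pattern.quote(name) + "\"\\s*:\\s*(\\d+)")
                .matcher(json);
        return m.find() ? Integer.parseInt(m.group(1)) : -1;
    }

    /** Fetches the response body of the given URL as a single string. */
    static String fetch(String url) throws IOException {
        HttpURLConnection conn = (HttpURLConnection) new URL(url).openConnection();
        conn.setConnectTimeout(2000);
        conn.setReadTimeout(2000);
        try (BufferedReader in = new BufferedReader(
                new InputStreamReader(conn.getInputStream(), StandardCharsets.UTF_8))) {
            StringBuilder body = new StringBuilder();
            String line;
            while ((line = in.readLine()) != null) {
                body.append(line);
            }
            return body.toString();
        } finally {
            conn.disconnect();
        }
    }

    public static void main(String[] args) throws IOException {
        // Assumption: the cluster summary is served at /overview.
        String json = fetch("http://localhost:8081/overview");
        System.out.println("TaskManagers reported: " + intField(json, "taskmanagers"));
    }
}
```

For the local cluster started above, the reported number should match the single TaskManager shown in the web frontend.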

Read the Code

You can find the complete source code for this SocketWindowWordCount example in Scala and Java on GitHub.

// SocketWindowWordCount (Scala)
import org.apache.flink.api.java.utils.ParameterTool
import org.apache.flink.streaming.api.scala._
import org.apache.flink.streaming.api.windowing.time.Time

object SocketWindowWordCount {

  def main(args: Array[String]) : Unit = {

    // the port to connect to
    val port: Int = try {
      ParameterTool.fromArgs(args).getInt("port")
    } catch {
      case e: Exception => {
        System.err.println("No port specified. Please run 'SocketWindowWordCount --port <port>'")
        return
      }
    }

    // get the execution environment
    val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment

    // get input data by connecting to the socket
    val text = env.socketTextStream("localhost", port, '\n')

    // parse the data, group it, window it, and aggregate the counts
    val windowCounts = text
      .flatMap { w => w.split("\\s") }
      .map { w => WordWithCount(w, 1) }
      .keyBy("word")
      .timeWindow(Time.seconds(5), Time.seconds(1))
      .sum("count")

    // print the results with a single thread, rather than in parallel
    windowCounts.print().setParallelism(1)

    env.execute("Socket Window WordCount")
  }

  // Data type for words with count
  case class WordWithCount(word: String, count: Long)
}

// SocketWindowWordCount (Java)
import org.apache.flink.api.common.functions.FlatMapFunction;
import org.apache.flink.api.common.functions.ReduceFunction;
import org.apache.flink.api.java.utils.ParameterTool;
import org.apache.flink.streaming.api.datastream.DataStream;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.util.Collector;

public class SocketWindowWordCount {

    public static void main(String[] args) throws Exception {

        // the port to connect to
        final int port;
        try {
            final ParameterTool params = ParameterTool.fromArgs(args);
            port = params.getInt("port");
        } catch (Exception e) {
            System.err.println("No port specified. Please run 'SocketWindowWordCount --port <port>'");
            return;
        }

        // get the execution environment
        final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

        // get input data by connecting to the socket
        DataStream<String> text = env.socketTextStream("localhost", port, "\n");

        // parse the data, group it, window it, and aggregate the counts
        DataStream<WordWithCount> windowCounts = text
            .flatMap(new FlatMapFunction<String, WordWithCount>() {
                @Override
                public void flatMap(String value, Collector<WordWithCount> out) {
                    for (String word : value.split("\\s")) {
                        out.collect(new WordWithCount(word, 1L));
                    }
                }
            })
            .keyBy("word")
            .timeWindow(Time.seconds(5), Time.seconds(1))
            .reduce(new ReduceFunction<WordWithCount>() {
                @Override
                public WordWithCount reduce(WordWithCount a, WordWithCount b) {
                    return new WordWithCount(a.word, a.count + b.count);
                }
            });

        // print the results with a single thread, rather than in parallel
        windowCounts.print().setParallelism(1);

        env.execute("Socket Window WordCount");
    }

    // Data type for words with count
    public static class WordWithCount {

        public String word;
        public long count;

        public WordWithCount() {}

        public WordWithCount(String word, long count) {
            this.word = word;
            this.count = count;
        }

        @Override
        public String toString() {
            return word + " : " + count;
        }
    }
}
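To see what the parse, group, and aggregate steps compute without running a cluster, the following plain-Java sketch applies the same logic to the lines that fall into a single window. It uses no Flink classes and leaves windowing out entirely; the class name `WindowWordCountSketch` and the method `countWords` are hypothetical names chosen for illustration.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Plain-Java illustration only; this is not how Flink executes the job.
final class WindowWordCountSketch {

    /** Counts the words contained in the lines that arrived during one window. */
    static Map<String, Long> countWords(List<String> linesInWindow) {
        Map<String, Long> counts = new LinkedHashMap<>();
        for (String line : linesInWindow) {
            // Same split as the flatMap step: one record per whitespace-separated token.
            for (String word : line.split("\\s")) {
                // Same effect as grouping by word and adding up the per-record count of 1.
                counts.merge(word, 1L, Long::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList("lorem ipsum", "ipsum ipsum ipsum", "bye");
        // Print in the same "word : count" format as WordWithCount.toString().
        countWords(lines).forEach((word, count) -> System.out.println(word + " : " + count));
    }
}
```

In the Flink job, the grouping and summing happen per key and per window, in parallel across the stream, whereas this sketch processes one window's worth of lines in a single loop.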

Run the Example

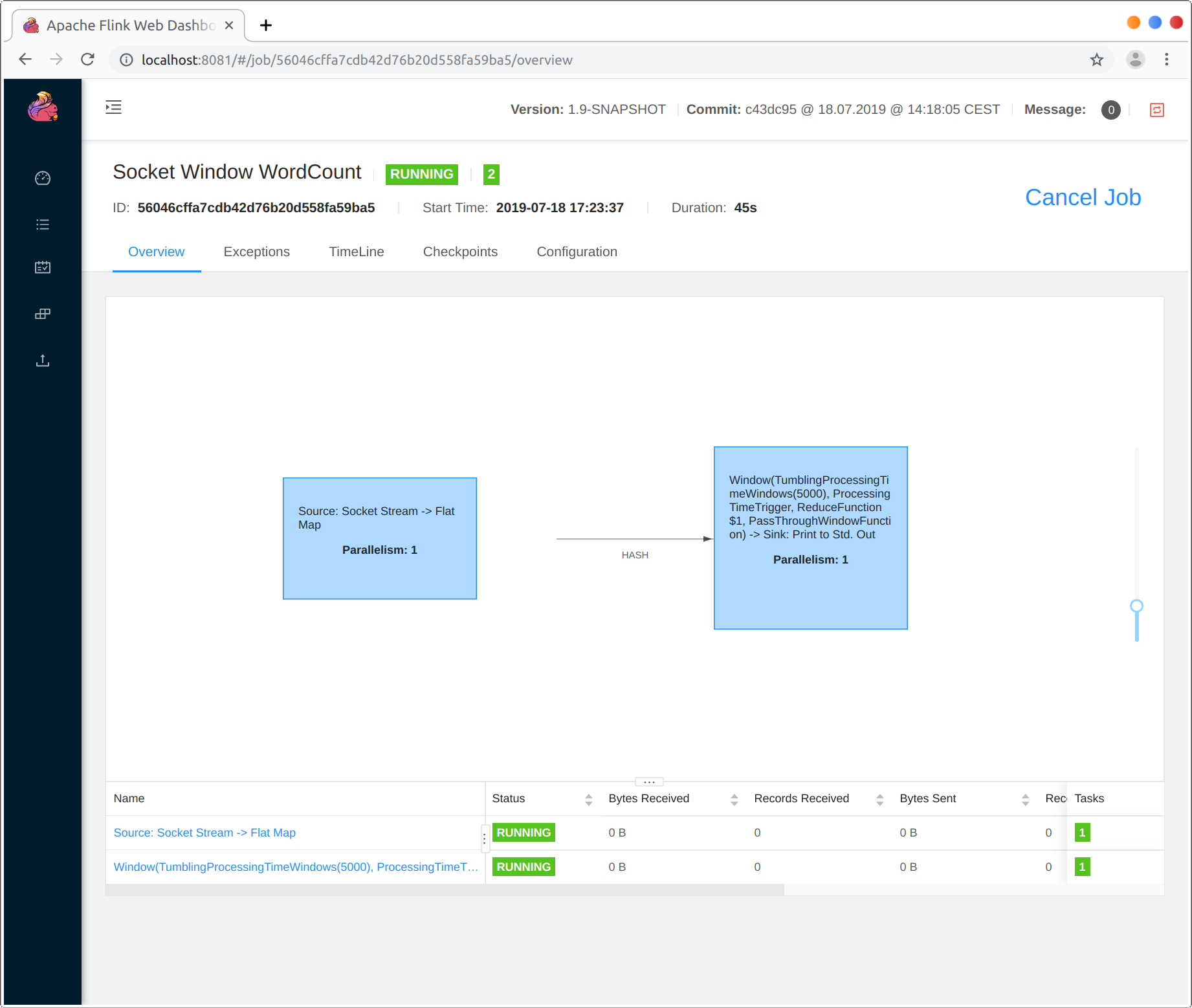

Now, we are going to run this Flink application. It will read text from a socket and, once every 5 seconds, print the number of occurrences of each distinct word during the previous 5 seconds, i.e. a tumbling window of processing time, as long as words keep arriving.
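A tumbling window splits time into fixed-size, non-overlapping intervals, so every input line belongs to exactly one window. The sketch below illustrates that assignment for 5-second windows. It is a simplified model under stated assumptions (non-negative millisecond timestamps, windows aligned to timestamp 0 with no offset), not Flink's own implementation, and the class and method names are hypothetical.

```java
// Simplified model of tumbling-window assignment; not Flink's implementation.
final class TumblingWindowSketch {

    /** Start (inclusive) of the tumbling window that contains the timestamp. */
    static long windowStart(long timestampMillis, long sizeMillis) {
        // Assumption: timestamps are non-negative and windows are aligned to 0.
        return timestampMillis - (timestampMillis % sizeMillis);
    }

    /** End (exclusive) of the tumbling window that contains the timestamp. */
    static long windowEnd(long timestampMillis, long sizeMillis) {
        return windowStart(timestampMillis, sizeMillis) + sizeMillis;
    }

    public static void main(String[] args) {
        long size = 5_000L; // 5-second windows, as in the example
        for (long ts : new long[] {0L, 4_999L, 5_000L, 7_300L}) {
            System.out.println(ts + " ms -> window ["
                    + windowStart(ts, size) + ", " + windowEnd(ts, size) + ")");
        }
    }
}
```

Lines typed at 0 ms and 4,999 ms land in the same window, while a line typed at 5,000 ms starts the next one, which is why the counts are reported once per 5-second interval.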

- First, use netcat to start a local server:

$ nc -l 9000

- Submit the Flink program:

$ ./bin/flink run examples/streaming/SocketWindowWordCount.jar --port 9000
Starting execution of program

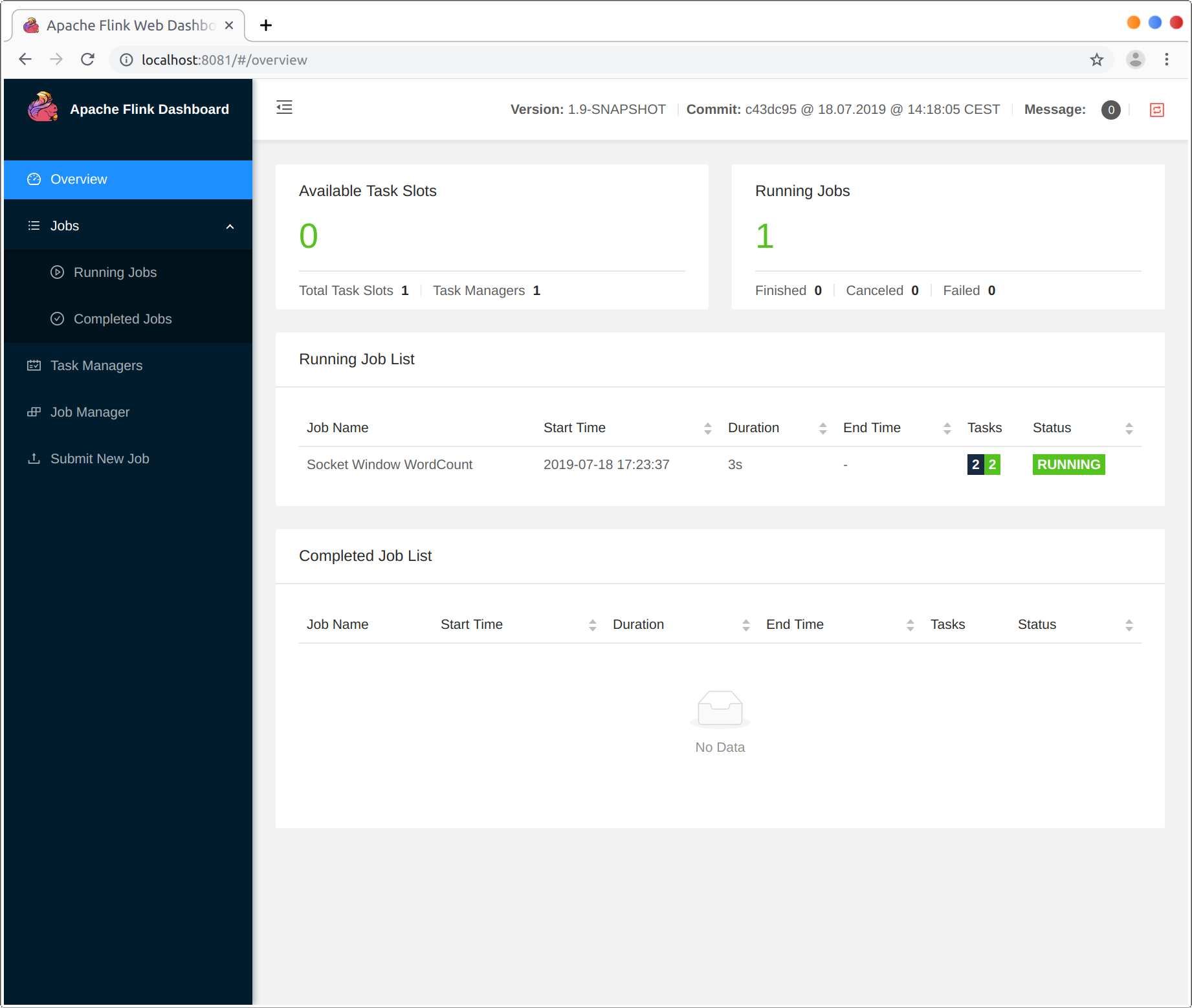

The program connects to the socket and waits for input. You can check the web interface to verify that the job is running as expected:

- Words are counted in time windows of 5 seconds (processing time, tumbling windows) and are printed to stdout. Monitor the TaskManager’s output file and write some text in nc (input is sent to Flink line by line after hitting Enter):

$ nc -l 9000
lorem ipsum
ipsum ipsum ipsum
bye

The counts are written to the .out file at the end of each time window as long as words keep arriving, e.g.:

$ tail -f log/flink-*-taskexecutor-*.out
lorem : 1
bye : 1
ipsum : 4

To stop Flink when you’re done, type:

$ ./bin/stop-cluster.sh

Next Steps

Check out some more examples to get a better feel for Flink’s programming APIs. When you are done with that, go ahead and read the streaming guide.